How FS Studio built a synthetic imagery pipeline that eliminated field testing for a leading agricultural robotics manufacturer

The fields didn’t exist. There were no tractors idling at the edge of rows, no agronomists kneeling in the soil, no early-morning dew on leaves catching the light. And yet, somewhere inside a simulation, a robotic system was learning to see.

This is the story of how a leading agricultural technology manufacturer stopped going to the field — and started going further, faster.

The Problem with Reality

The company built machines that worked the land so farmers didn’t have to. Robotic platforms. Computer vision systems. Automated equipment designed to move through crops with precision. But developing and refining that technology came with a frustrating dependency: you needed real crops, real fields, and real conditions to test anything.

Field testing is expensive. It’s seasonal. A missed window means waiting months. And when you’re trying to optimize a vision system across dozens of different crop types, densities, and mounting configurations, the logistics quickly become unmanageable. The team knew there had to be a better way. What they lacked was the expertise to build it.

That’s when they brought in our team.

How the Teams Worked Together

From day one, FS Studio treated this less like a vendor engagement and more like a shared build. The two teams established a working rhythm that kept communication tight without creating overhead.

Every week, the teams met face-to-face (or screen-to-screen) for structured check-ins. These weren’t status updates for their own sake. They were working sessions: a chance to review new builds, walk through visual output together, and collect the kind of immediate, live feedback that’s hard to convey in writing. Seeing a simulation frame side-by-side with a real crop photo and hearing in real time that the density isn’t right or the shadow angles are off was far more efficient than rounds of written back-and-forth.

Between those sessions, communication moved to the client’s preferred messaging platform, keeping the async feedback loop fast and frictionless. Questions got answered the same day. A developer could flag a rendering issue in the morning and have a decision by afternoon. Nothing sat in an inbox waiting for the next meeting.

This structure — synchronous rhythm plus async flexibility — meant that when problems surfaced, they got resolved quickly, and the project kept moving.

Project Objectives

The engagement was defined around a clear set of goals, agreed upon early and revisited throughout:

- Develop a simulation system capable of generating photorealistic synthetic imagery representing the perspective of the robotic vision system at variable mounting angles and heights

- Optimize and validate multiple robotic platform configurations against a range of crop types and density conditions

- Source, prepare, and integrate high-fidelity 3D models of crops, weeds, and the robotic platform itself

- Build custom shaders to balance rendering performance with visual realism

- Ensure the environment meets the quality bar required for genuine R&D use: not just a demo, but a working tool
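The mounting-angle and mounting-height objective is largely a geometry question. Where a camera sits determines which strip of ground it sees, and how much of each plant appears in view. The sketch below is only an illustration and is not the simulation FS Studio built. It assumes a simple pinhole camera pitched down over flat ground. The function name `ground_footprint`, the field-of-view value, and the heights and angles in the sweep are all hypothetical.

```python
import itertools
import math


def ground_footprint(height_m, pitch_deg, vfov_deg):
    """Return (near, far) ground distances, in metres, covered by a camera's view.

    Hypothetical sketch: assumes flat ground and a pinhole camera at height_m,
    pitched down by pitch_deg below horizontal, with vertical field of view vfov_deg.
    Distances are measured along the ground from the point directly below the camera.
    """
    if height_m <= 0:
        raise ValueError("height_m must be positive")
    lower_edge = pitch_deg + vfov_deg / 2  # depression angle of the bottom image edge
    upper_edge = pitch_deg - vfov_deg / 2  # depression angle of the top image edge
    if lower_edge >= 90:
        raise ValueError("bottom image edge points straight down or backwards; not handled here")
    near = height_m / math.tan(math.radians(lower_edge))
    # If the top edge is at or above the horizon, that ray never meets the ground.
    far = math.inf if upper_edge <= 0 else height_m / math.tan(math.radians(upper_edge))
    return near, far


# Hypothetical configuration sweep: every combination of mounting height and pitch.
HEIGHTS_M = [0.8, 1.2, 1.6]
PITCHES_DEG = [40, 55, 70]
VFOV_DEG = 30

for height, pitch in itertools.product(HEIGHTS_M, PITCHES_DEG):
    near, far = ground_footprint(height, pitch, VFOV_DEG)
    print(f"height={height:.1f} m pitch={pitch} deg -> ground from {near:.2f} m to {far:.2f} m")
```

The loop uses `itertools.product` to sweep every height and pitch combination, which mirrors the idea of exploring one configuration after another. In a rendering pipeline, each combination would presumably set a camera placement for rendered frames rather than just printing a ground range.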

Deliverables

By project close, FS Studio delivered:

- A fully functional simulation environment built on Unity’s DOTS framework, capable of rendering high-density crop and weed scenarios at scale

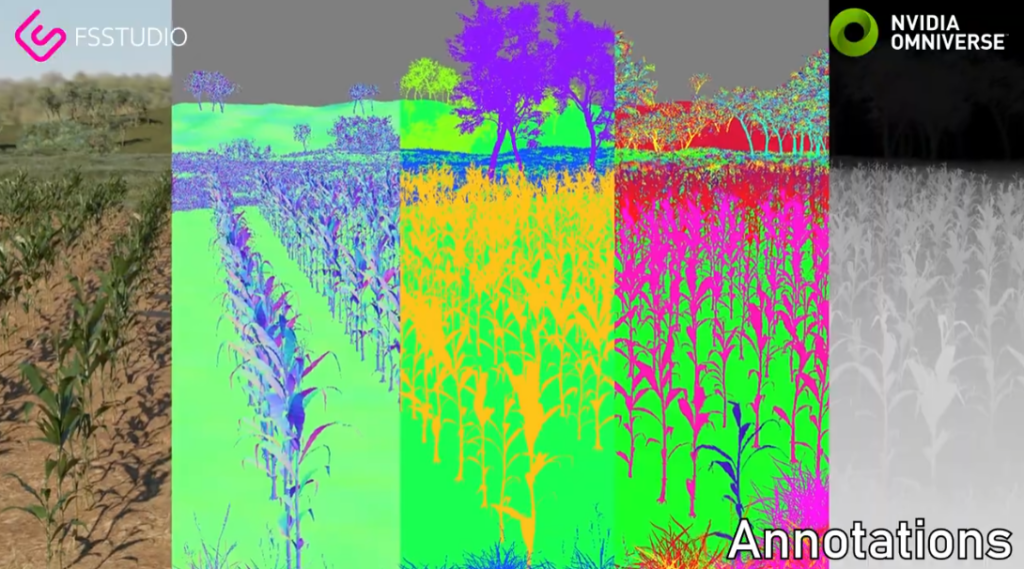

- A synthetic image generation pipeline producing accurate visual representations of the machine vision system’s perspective across multiple configurations

- A complete library of prepared 3D plant and weed models, optimized with texture atlases for consistent performance

- An animated 3D model of the client’s robotic platform, integrated into the simulation environment

- Custom-built shaders tuned specifically for the project’s performance and fidelity requirements

- Documentation and handoff support enabling the client’s internal teams to operate and extend the tool independently

Building the Invisible Farm

With the relationship and scope established, the real work began, and it was harder than it sounds.

One of the first major challenges was density. Real agricultural fields are chaotic: crops and weeds grow in overlapping, competing tangles, and any simulation that felt sparse or artificial would defeat the purpose. To render those environments faithfully without grinding the system to a halt, the team turned to Unity’s DOTS (Data-Oriented Technology Stack). DOTS combines an Entity Component System with the C# Job System and the Burst compiler so that very large numbers of similar objects, such as individual plants in a dense field, can be processed efficiently.

Plant and weed models had to be sourced, then painstakingly prepared for consistency and visual fidelity. The team built texture atlases, which pack many textures into a single image so that many models can share one material. This kept performance high without sacrificing realism. A full 3D model of the robotic platform itself was acquired and animated so the simulation could show the machine in motion, not just standing in a field.
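A texture atlas works by giving each model its own sub-rectangle of one shared image and remapping the model's UV coordinates into that rectangle. The sketch below shows this remapping for a simple uniform-grid atlas. It is a hypothetical illustration. The grid layout, the function names `atlas_rect` and `remap_uv`, and the example texture names are assumptions, not details of the project's actual atlases.

```python
from typing import Tuple

Rect = Tuple[float, float, float, float]  # (u_offset, v_offset, u_scale, v_scale)


def atlas_rect(index: int, columns: int, rows: int) -> Rect:
    """Return the UV rectangle of tile `index` in a uniform columns x rows grid atlas.

    Tiles are numbered left to right, then bottom to top, matching UV space
    where (0, 0) is the bottom-left corner of the atlas.
    """
    if not 0 <= index < columns * rows:
        raise IndexError("tile index out of range")
    col, row = index % columns, index // columns
    return col / columns, row / rows, 1 / columns, 1 / rows


def remap_uv(uv: Tuple[float, float], rect: Rect) -> Tuple[float, float]:
    """Map a model's original UV (0..1 over its own texture) into the atlas tile."""
    u, v = uv
    u_off, v_off, u_scale, v_scale = rect
    return u_off + u * u_scale, v_off + v * v_scale


# Hypothetical example: four plant textures packed into a 2 x 2 atlas.
textures = ["crop_leaf", "crop_stem", "weed_broadleaf", "weed_grass"]
for i, name in enumerate(textures):
    rect = atlas_rect(i, columns=2, rows=2)
    print(name, "centre maps to", remap_uv((0.5, 0.5), rect))
```

Production atlases usually pack tiles of different sizes. They also add padding between tiles so that texture filtering and mipmapping do not blend neighbouring tiles together. This sketch omits both.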

Then there were the shaders. Custom-built for this project, they sat at the intersection of performance and realism — making sure the synthetic imagery didn’t just look close to real, but close enough to be genuinely useful for training and testing a machine vision system.

What Came Out the Other Side

When the simulation was complete, it delivered everything the client had asked for. The synthetic imagery accurately replicated what the equipment’s vision systems would observe in the real world — different crops, different densities, different perspectives. Engineers could now explore configuration after configuration without booking a single field visit.

But something unexpected happened. Word spread inside the organization.

What had been built as a product development tool started attracting interest from other corners of the company. Training teams saw it as a way to onboard new employees — to walk people through the technology visually and show them, concretely, how the systems worked. It became a demonstration platform. A communication tool. A way of making something abstract suddenly tangible.

The simulation had grown into something larger than anyone had originally scoped.

The Bigger Picture

The results were measurable: faster research cycles, lower development costs, a competitive edge in a field where the pace of automation is accelerating. But the more lasting achievement was a shift in how the company approached the challenge of building for the real world.

By building a faithful simulation of the farm, they had found a way to learn from it without being bound by it. The seasons no longer dictated the R&D calendar. A configuration that might have taken months to test in the field could now be evaluated in days.

FS Studio’s contribution wasn’t just technical; it was a reframing of the problem. The question was never “How do we test in the field more efficiently?” The real question turned out to be: “What if we didn’t have to?”